AI can be genuinely useful in teaching—planning lessons, creating practice questions, generating examples, supporting feedback routines, helping students rehearse explanations. But the moment AI enters the classroom, it also brings predictable risks: privacy issues, hidden bias, over-reliance, unclear authorship, and “confidently wrong” content.

Ethical AI in education isn’t about fear or perfection. It’s about teacher control, student protection, and learning integrity—with simple routines you can apply every time.

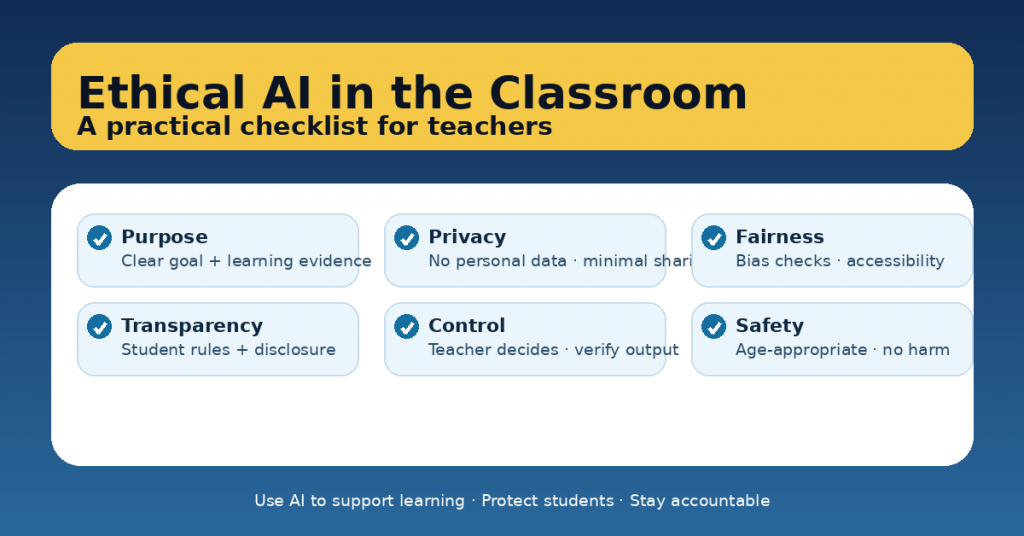

Below is a practical checklist you can use before, during, and after any AI-supported activity.

The Ethical AI Checklist (teacher-ready)

1) Purpose: Why are we using AI here?

- ☐ The learning goal is clear (not “try an AI tool”).
- ☐ AI supports the goal (it doesn’t replace the thinking).
- ☐ Students still produce evidence of learning (drafts, reasoning, oral explanation, process notes).
- ☐ The task has a “no-AI core” (what must remain human).

Quick test: If AI disappeared, would the lesson still make sense? If not, redesign.

2) Privacy: Are we protecting student data?

- ☐ No personal student information is entered (names, identifiers, health/needs, grades linked to identity).
- ☐ Student work is anonymized if used in prompts (remove identifying details).
- ☐ Data sharing is minimal (only what’s necessary to get useful output).
- ☐ You know where the data goes (school policy/platform rules).

Default: If you wouldn’t post it publicly, don’t paste it into a tool.
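If you do paste student work into a tool, the anonymizing step can be partly automated. The following is a minimal sketch, not a complete privacy solution: the function name `anonymize`, the placeholder labels, and the rule that any run of five or more digits is an ID are all assumptions made for illustration. It only catches names you list yourself, so a manual read-through is still needed.

```python
import re

def anonymize(text, student_names):
    """Replace listed names, email addresses, and long digit runs with placeholders.

    Hypothetical helper for illustration: it cannot detect names that are
    not in student_names, nicknames, or other identifying details.
    """
    redacted = text
    # Swap each known name for a numbered placeholder (case-insensitive).
    for i, name in enumerate(student_names, start=1):
        pattern = re.compile(r"\b" + re.escape(name) + r"\b", flags=re.IGNORECASE)
        redacted = pattern.sub(f"[STUDENT_{i}]", redacted)
    # Mask anything that looks like an email address.
    redacted = re.sub(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+", "[EMAIL]", redacted)
    # Assumption: runs of five or more digits are treated as student IDs.
    redacted = re.sub(r"\b\d{5,}\b", "[ID]", redacted)
    return redacted

sample = "Maria Lopez (ID 20231234, maria.lopez@school.example) wrote: My family moved here."
print(anonymize(sample, ["Maria Lopez"]))
```

The placeholders keep the text readable for the AI tool while removing the link to a real student. Details inside the writing itself (a street name, a family situation) still need a human check.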

3) Fairness and inclusion: Does this widen gaps?

- ☐ Outputs are checked for bias and stereotypes (culture, gender, race, ability).
- ☐ Language level matches your learners (especially EAL/ELL students).
- ☐ Alternatives exist for students with limited access to devices or AI tools.
- ☐ The activity includes scaffolds (sentence starters, examples, vocabulary support).

Teacher move: Ask AI for three versions (standard / accessible / extension), then choose.
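The "three versions" move can be turned into a reusable template. This is a sketch under stated assumptions: the function name `build_version_prompts` and the wording of each instruction are hypothetical, and the output is plain prompt text to paste into whatever tool your school permits. No AI service is called.

```python
# Hypothetical instruction wording for each version; adjust to your class.
VERSIONS = {
    "standard": "Write it at the expected level for the class.",
    "accessible": "Use shorter sentences, simpler vocabulary, and add sentence starters.",
    "extension": "Add a challenge that asks students to justify or extend their reasoning.",
}

def build_version_prompts(task, audience):
    """Return one prompt per version (standard / accessible / extension)."""
    return {
        label: f"Task: {task}\nLearners: {audience}\nVersion: {label}. {instruction}"
        for label, instruction in VERSIONS.items()
    }

prompts = build_version_prompts(
    task="Create five practice questions on photosynthesis.",
    audience="Year 8 science, mixed ability, several EAL students",
)
for label, prompt in prompts.items():
    print(f"--- {label} ---\n{prompt}\n")
```

The teacher still reviews all three outputs and chooses; the template only makes the request consistent.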

4) Accuracy: Are we verifying “confident nonsense”?

- ☐ Facts, examples, sources, and definitions are verified when they matter.
- ☐ You expect errors and model checking (not blind trust).
- ☐ Students know AI can be wrong, and how to challenge it.
- ☐ You avoid using AI as an “authority” in assessment or feedback.

Simple verification routine: Accuracy → Alignment → Equity.

5) Transparency: Are expectations and rules clear?

- ☐ Students know when AI is allowed, optional, or not allowed.
- ☐ Students know what counts as acceptable use (brainstorming vs. writing the final answer).
- ☐ Students disclose their AI use (short, simple, non-punitive).
- ☐ Parents/guardians are informed if required by school policy.

A good disclosure line for students:

“AI helped me with ___; I changed ___; my final work is mine.”

6) Student agency: Are students still learning to think?

- ☐ Students must explain why they chose ideas or answers (not just copy).
- ☐ The task requires reasoning traces (steps, justification, reflection).
- ☐ AI is used to practice, not to perform (coaching, not replacing).
- ☐ You teach “prompting as thinking” (good questions, constraints, checking).

Red flag: If students can submit AI output with no understanding, the task needs redesign.

7) Assessment integrity: Are you assessing the right thing?

- ☐ The assessment measures student understanding, not tool skill.
- ☐ There is a no-AI assessment component when needed (in-class writing, oral defense, quick quiz).
- ☐ Rubrics focus on evidence (reasoning, structure, accuracy), not just polish.
- ☐ You do not outsource grading decisions to AI.

Practical approach: Use AI for practice and feedback loops, not for final judgement.

8) Safety and appropriateness: Is it age-appropriate and non-harmful?

- ☐ Prompts avoid sensitive topics unless planned and supported.
- ☐ Outputs are screened for unsafe content, misinformation, or inappropriate tone.
- ☐ Students know what to do if AI produces harmful or uncomfortable content.
- ☐ Classroom norms protect respectful dialogue and wellbeing.

Teacher rule: You’re responsible for the learning environment—AI doesn’t change that.

9) Teacher control: Who is accountable?

- ☐ You keep the final decision on materials, feedback, and evaluation.
- ☐ You use AI to generate options, then curate.
- ☐ You document what worked and adjust (mini-retro).
- ☐ You can explain the rationale for using AI in this lesson.

Ethical bottom line: The teacher remains accountable for quality and impact.

A one-paragraph “ethical prompt” you can copy/paste

Use this as a wrapper around any request to an AI tool: