Students are using AI. Some will use it well. Some will use it to shortcut. And most will use it inconsistently—because they’re experimenting, anxious about grades, or unsure what’s allowed.

The wrong response is panic: bans that don’t hold, “AI detectors” that aren’t reliable, and assessment designs that assume students won’t have access to powerful tools.

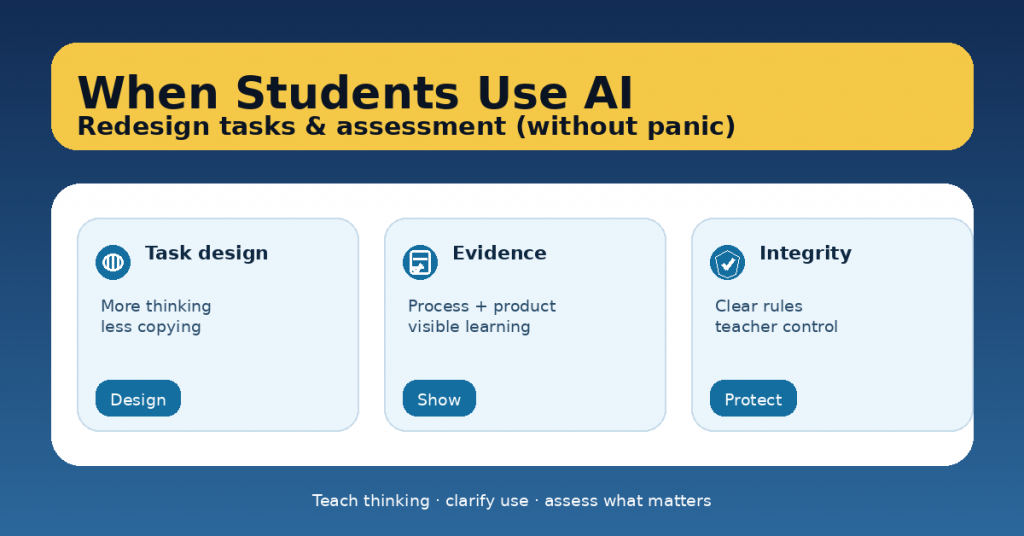

The better response is redesign: clarify expectations, build tasks that require thinking, and assess learning through evidence that students can’t easily produce with AI unless they actually understand the material.

This article offers a practical approach you can apply this term—without turning your classroom into a policing system.

1) Start with a calm truth: this is an assessment design problem

When AI can generate fluent text, any task that rewards:

- generic answers,
- “explain the topic” prompts,
- shallow summaries,
- long final products with no process…

…will be vulnerable. That’s not a student morality crisis. It’s a signal to shift toward:

- reasoning over wording
- process over polish
- evidence over output

2) Decide your AI stance (and communicate it clearly)

Students need simple rules, not ambiguity. Choose one of these classroom policies per task:

Option A: No-AI (for specific purposes)

Use when you are assessing individual baseline skills (e.g., in-class writing, timed practice).

- Clear boundary: “No AI for this task.”
- Provide support: models, scaffolds, checklists.

Option B: AI-assisted (recommended default)

AI is allowed for defined parts of the workflow:

- brainstorming,
- outlining,
- generating practice questions,
- feedback suggestions.

But not allowed for:

- submitting a final answer without attribution,
- replacing required reasoning.

Option C: AI-integrated (advanced)

Students use AI intentionally and are assessed on:

- how they prompt,
- how they verify,
- how they improve the output,
- and what they learn from the process.

One sentence you can post on the wall:

“AI can support your work, but it can’t replace your thinking.”

3) Redesign tasks so thinking is unavoidable

Here are the highest-leverage redesign moves.

A) Add local context (make it “yours”)

Generic prompts invite generic AI outputs. Anchor tasks in:

- your class discussion,
- a local case study,
- a shared text/data set,
- your school/community context.

Example:

Instead of “Write an essay about climate change,” use:

“Using our class data from the school energy audit, propose two actions and justify them with evidence.”

B) Require decision-making + justification

AI can suggest options, but students must choose one and defend it.

Prompt upgrade:

- “Choose the best option and justify why it fits this scenario.”
- “Compare two approaches and argue for one.”
- “Explain trade-offs and constraints.”

Assessment focus: quality of reasoning, not length.

C) Build in “micro-evidence” checkpoints

Don’t wait for a final submission. Collect evidence along the way:

- a claim + 2 reasons (exit ticket),
- annotated sources,
- a draft paragraph with margin notes,
- a short oral explanation,
- a worked example.

This makes learning visible and reduces last-minute outsourcing.

D) Add a lived component: oral defense or in-class synthesis

You don’t need long viva exams. A 2–3 minute check is enough.

Options:

- “Explain your argument in 60 seconds.”
- “Walk me through your steps.”
- “Why did you choose this example?”
- “What would you change if…?”

Students who used AI well can still succeed—because they understand.

E) Use “show your work” artifacts

Require one or two of these:

- drafts (before/after),
- revision notes,
- reflection (“What changed and why?”),
- source trail,
- process screenshots (optional),
- peer feedback notes.

This shifts the incentive from hiding AI use to learning from it.

4) Redesign assessment: what you grade changes everything

If your rubric rewards polish, students will outsource polish. If your rubric rewards evidence and thinking, AI becomes less disruptive.

A rubric structure that works well

Use 3–5 criteria such as:

- Understanding and accuracy
- Reasoning and justification
- Use of evidence
- Clarity of communication
- Reflection and improvement

Add one simple rule:

“If reasoning is missing, the work cannot score above ___.”

5) Teach “AI literacy” as part of the task (not a separate lecture)

If you allow AI, students need three skills:

Skill 1: Prompt with constraints

- audience, length, level,
- required concepts,
- format,
- what not to do.

Skill 2: Verify and challenge

- “What might be wrong here?”
- “Show assumptions.”
- “Give counterexamples.”

Skill 3: Improve, don’t paste

- rewrite in their own structure,
- add class-specific evidence,
- make choices explicit.

Class norm:

“Use AI like a coach, not like a ghostwriter.”

6) What NOT to rely on

AI detectors

They are unreliable: they produce false positives, particularly for multilingual students writing in a second language, and their accuracy shifts as AI models evolve. Use them cautiously, if at all, and don’t base high-stakes decisions on them alone.

Gotcha policing

If students feel you’re trying to trap them, they’ll hide more. Transparency and process evidence work better than suspicion.

7) A practical “tomorrow-ready” toolkit

If you want a quick reset without redesigning everything at once:

- Add a process checkpoint (one draft + one reflection)
- Add a short oral explanation (60 seconds)
- Shift rubric weight toward reasoning + evidence
- Publish a simple AI policy for the task (No-AI / AI-assisted / AI-integrated)

That’s it. Small changes, big effect.